On March 31, 2026, software engineer Chaofan Shou discovered that Anthropic’s Claude Code source code was unintentionally exposed through a source map file included in its npm package. This marks the second time in under 15 months that the tool’s source has been leaked in the same way.

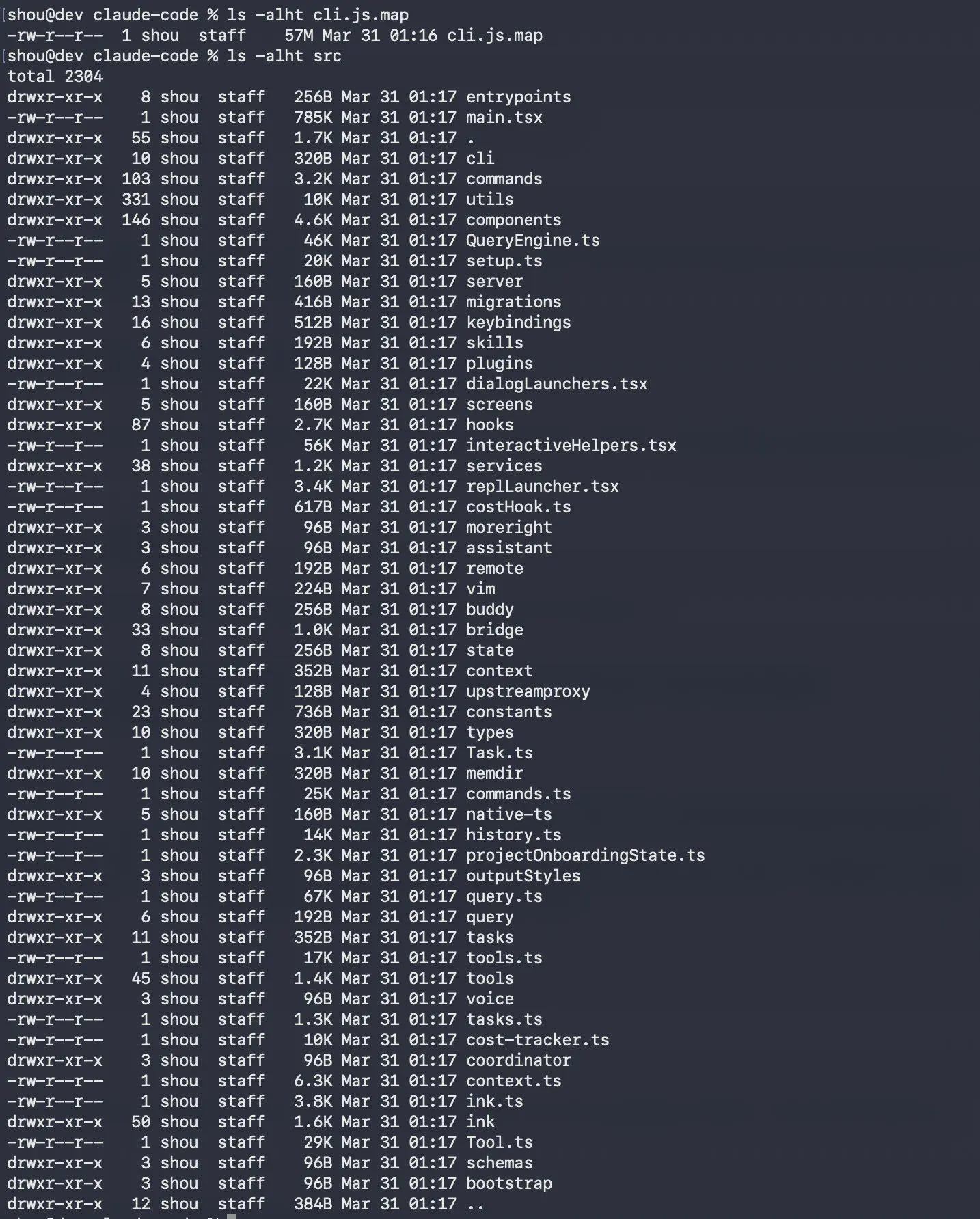

The exposed codebase contains roughly 1,900 TypeScript files and over 500,000 lines of code, effectively revealing the full architecture of Anthropic’s AI-powered developer CLI.

Within hours, the code was mirrored across public GitHub repositories, where it quickly gained traction among developers and researchers analyzing the system.

How the Leak Happened

The exposure was caused by source map files that were accidentally included in the npm package. Source maps exist for debugging: they map compiled, bundled code back to its original source, and they can embed the original files verbatim. In this case, they effectively shipped the full readable codebase to anyone who installed the package.
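To make the mechanism concrete, here is a minimal sketch of how original files can be recovered from a source map. It relies only on the standard Source Map v3 fields `sources` and `sourcesContent`. The function name `extract_sources` and the sample map are hypothetical and hand-written for illustration; they are not taken from the leaked package.

```python
import json


def extract_sources(source_map_text: str) -> dict:
    """Return {original_path: original_source} for every embedded file."""
    source_map = json.loads(source_map_text)
    sources = source_map.get("sources", [])
    # "sourcesContent" is optional; when present it runs parallel to "sources".
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for path, content in zip(sources, contents):
        if content is not None:  # null means this file was not embedded
            recovered[path] = content
    return recovered


# Hypothetical, hand-written map (assumption: not real Claude Code data).
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["../src/main.ts", "../src/tools/shell.ts"],
    "sourcesContent": ["export const main = () => {};\n", None],
    "names": [],
    "mappings": "",
})

for path, text in extract_sources(example_map).items():
    print(path, len(text), "characters")
```

A map that lists `sources` without `sourcesContent` reveals only file paths, so how much a map exposes depends on how the bundler was configured.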

Screenshot showing exposed source map and extracted source files from the Claude Code npm package.

What the Code Reveals

The leaked code shows that Claude Code is a production-grade system built on the Bun runtime, using React with the Ink library for terminal UI rendering.

The tool includes:

- ~40 built-in tools for file operations, shell execution, web requests, and LSP integration

- ~50 slash commands for Git workflows, code review, and memory management

- A large query engine (~46,000 lines) handling LLM calls, streaming, and caching

- A multi-agent system capable of spawning parallel “sub-agents”

- An IDE bridge connecting the CLI to VS Code and JetBrains via JWT authentication

- A persistent memory system storing user and project context

The architecture also uses strict permission-gated tooling, schema validation via Zod, and lazy-loaded dependencies to balance performance and capability.
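The combination of permission gating, input validation, and lazy loading can be sketched in a few lines. This is an illustrative Python sketch, not code from the leak: the `Tool` record, `run_tool`, and the permission string `fs.read` are all hypothetical, and a hand-rolled type check stands in for Zod, which is a TypeScript library.

```python
import importlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    permission: str  # permission the caller must hold
    schema: dict     # field name -> required Python type
    module: str      # module imported only when the tool first runs
    function: str    # callable looked up inside that module


def validate(schema: dict, args: dict) -> None:
    """Reject missing, extra, or wrongly typed fields."""
    if set(args) != set(schema):
        raise ValueError(f"expected fields {sorted(schema)}, got {sorted(args)}")
    for field, expected in schema.items():
        if not isinstance(args[field], expected):
            raise ValueError(f"{field} must be {expected.__name__}")


def run_tool(tool: Tool, args: dict, granted: set):
    # 1. Permission gate: refuse before touching the input.
    if tool.permission not in granted:
        raise PermissionError(f"{tool.name} requires '{tool.permission}'")
    # 2. Schema validation: refuse malformed input.
    validate(tool.schema, args)
    # 3. Lazy load: import the implementation only now.
    impl = getattr(importlib.import_module(tool.module), tool.function)
    return impl(**args)


# Hypothetical tool backed by the standard library's fnmatch.fnmatch(name, pat).
match_tool = Tool("glob_match", "fs.read", {"name": str, "pat": str}, "fnmatch", "fnmatch")

print(run_tool(match_tool, {"name": "cli.js.map", "pat": "*.map"}, {"fs.read"}))
```

Checking the permission before validating or importing anything means a denied call does no work beyond the check itself, which is one plausible reason to order the steps this way.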

Repeat Incident

This is not the first time Claude Code has been exposed through source maps. A similar incident occurred in February 2025, suggesting the underlying issue was not fully addressed.

The recurrence points to weaknesses in build pipeline controls and release validation.

Broader Security Context

The exposure comes during a period of broader security issues at Anthropic. In March 2026, reports revealed that internal files, including details about unreleased models, were also exposed due to a misconfigured CMS.

Although the two incidents are technically unrelated, together they point to ongoing operational security challenges.

Root Cause

The issue likely stems from misconfigured npm publishing settings, such as missing .npmignore rules or an overly broad files list in package.json.

Source maps are useful in development but should not ship in a published package unless the source is meant to be public. Mature release pipelines typically prevent this with automated checks on the package contents before publishing.
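One form such an automated check could take is a script that inspects the package tarball before it is published. The sketch below assumes the tarball is a gzipped archive like the one `npm pack` produces, with entries under a `package/` prefix. The function names and the in-memory sample archive are hypothetical, and nothing here is known to reflect Anthropic's actual pipeline.

```python
import io
import tarfile


def find_source_maps(tarball_bytes: bytes) -> list:
    """List every .map file inside a gzipped package tarball."""
    with tarfile.open(fileobj=io.BytesIO(tarball_bytes), mode="r:gz") as archive:
        return sorted(
            member.name
            for member in archive.getmembers()
            if member.isfile() and member.name.endswith(".map")
        )


def build_example_tarball(files: dict) -> bytes:
    """Build an in-memory .tgz so the check can be shown without running npm."""
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w:gz") as archive:
        for name, data in files.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(data)
            archive.addfile(info, io.BytesIO(data))
    return buffer.getvalue()


# Hypothetical package contents for illustration.
example = build_example_tarball({
    "package/package.json": b"{}",
    "package/cli.js": b"console.log('hi');",
    "package/cli.js.map": b"{}",
})

leaked = find_source_maps(example)
if leaked:
    print("refusing to publish; source maps found:", leaked)
```

In a real pipeline, the bytes would come from the tarball written by `npm pack`, and the script would exit with a non-zero status instead of printing, so that the publish step never runs.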

Why This Matters

While the leak does not expose model weights or training data, it gives competitors deep insight into how Anthropic builds its developer tools.

This includes architecture decisions around:

- Multi-agent orchestration

- Permission systems

- IDE integrations

- LLM response handling

For competitors, this significantly lowers the barrier to building similar systems.

Security Implications

The exposed code also reveals internal permission boundaries and system behavior, which could help attackers identify weaknesses or bypass techniques.

Previous reports have shown that AI coding tools can be abused in targeted attacks, making this level of visibility particularly sensitive.

Response

Anthropic has not publicly commented on the incident at the time of writing. While the source maps have likely been removed from the package, the code has already been mirrored across multiple repositories and is unlikely to disappear.

The repeated nature of this issue suggests that stronger release safeguards may still be needed.

Industry Takeaway

This incident highlights both the complexity of modern AI developer tools and the importance of secure build and release processes.

For developers, it offers a rare look at production-grade architecture. For organizations, it serves as a reminder that even small configuration mistakes can lead to large-scale exposure.