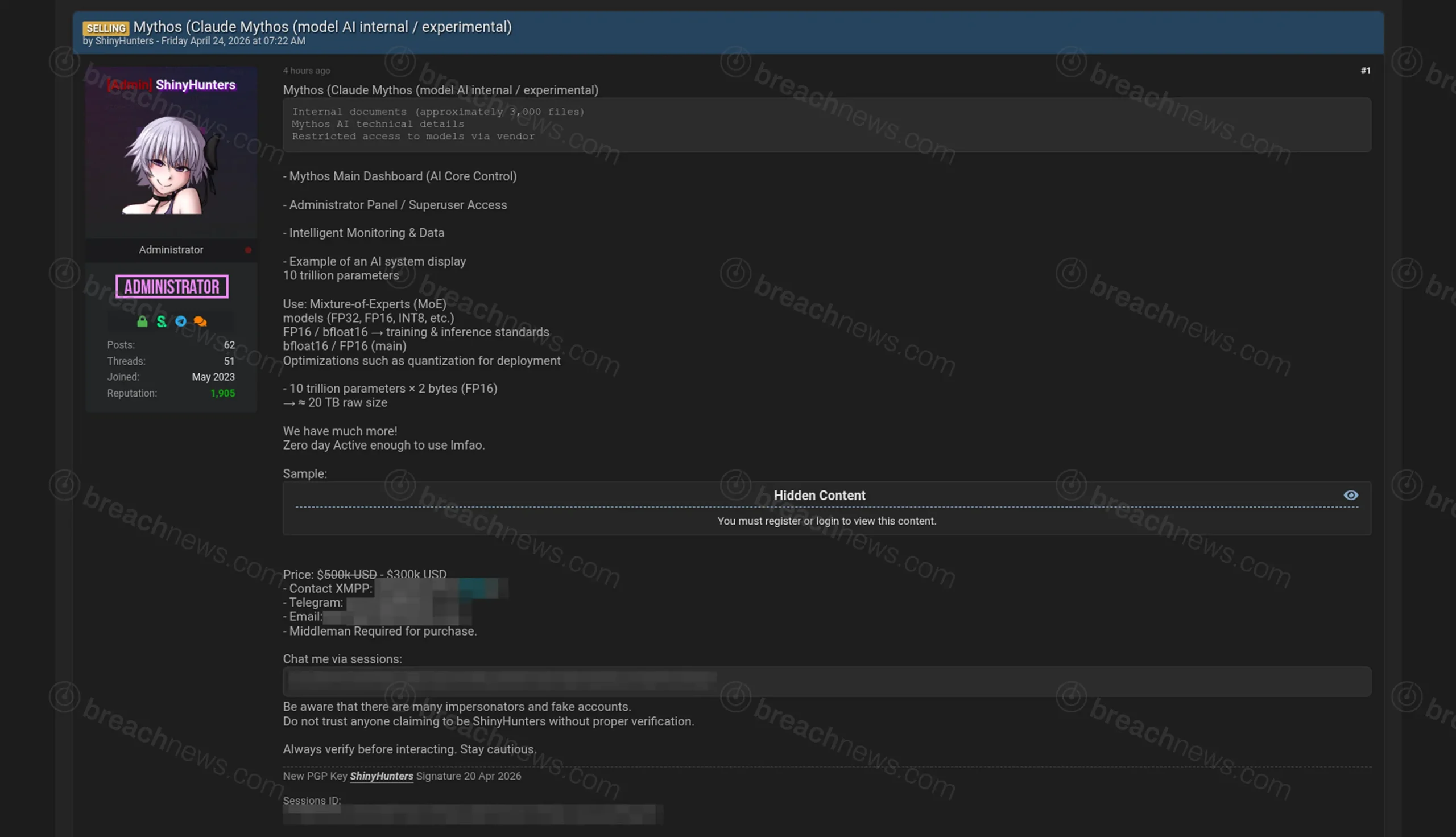

The threat actor group ShinyHunters is claiming to sell what it describes as internal data tied to an experimental Anthropic AI model referred to as “Claude Mythos,” including thousands of documents, technical specifications, and purported access to restricted systems. The listing, posted April 24, 2026, has not been independently verified, and Anthropic had not issued any public statement at the time of publication.

Threat actor claims access to internal AI model data and systems

According to the listing, the dataset allegedly includes approximately 3,000 internal documents along with technical details related to model architecture, training configurations, and deployment environments. The actor also claims access to administrative panels and monitoring systems tied to the AI platform, suggesting visibility into internal operations.

The post describes what it calls a “core control dashboard,” alongside references to superuser-level access and system monitoring interfaces. These claims, if accurate, would indicate exposure beyond static data and into operational systems, though none of the claims has been independently validated.

Model specifications raise questions about accuracy of claims

ShinyHunters describes the alleged model as having 10 trillion parameters using a mixture-of-experts architecture, with an estimated raw size of approximately 20 terabytes when stored in standard precision formats. While large-scale models using these techniques do exist, the scale and framing of the claims raise questions about their accuracy and whether the data represents a production system, an experimental project, or potentially exaggerated figures.
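
The size figure can at least be checked for internal consistency. The short sketch below multiplies the claimed parameter count by a few common per-parameter storage widths. The byte widths and the function name are illustrative assumptions, not details from the listing, and the result says nothing about whether such a model exists.

```python
# Back-of-the-envelope check of the size claim in the listing.
# Only the parameter count comes from the listing; the storage
# widths below are common formats assumed here for illustration.
PARAMS_CLAIMED = 10 * 10**12  # 10 trillion parameters, as claimed

BYTES_PER_PARAM = {"fp32": 4, "fp16/bf16": 2, "int8": 1}


def raw_size_tb(num_params: int, bytes_per_param: int) -> float:
    """Return raw weight size in decimal terabytes (1 TB = 10**12 bytes)."""
    return num_params * bytes_per_param / 10**12


for fmt, width in BYTES_PER_PARAM.items():
    print(f"{fmt}: {raw_size_tb(PARAMS_CLAIMED, width):.0f} TB")
```

Under these assumptions, the advertised 20 terabytes lines up with 2 bytes per parameter, as in 16-bit formats, while 32-bit storage would come to roughly 40 terabytes. The arithmetic is consistent, but consistency alone does not validate the claim, since a fabricated listing could easily be built around the same multiplication.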

Threat actors frequently inflate technical specifications in sale listings to increase perceived value, particularly in cases involving intellectual property or emerging technologies such as artificial intelligence.

Listing includes alleged zero-day and restricted model access

The actor further claims that the package includes a zero-day vulnerability and restricted access pathways to the model via vendor systems. No technical details or proof-of-concept information has been provided to substantiate these claims, and it remains unclear whether any vulnerability exists or whether the statement is part of standard extortion or sales language.

The listing advertises the dataset at a price between $300,000 and $500,000, positioning it as a high-value asset tied to proprietary AI development.

Follows broader ShinyHunters campaign targeting corporate data

The alleged sale comes amid a broader wave of activity from ShinyHunters involving multiple organizations across industries. BreachNews recently reported on a series of claimed data releases impacting companies including Zara, 7-Eleven, and Carnival, where the group asserted that datasets were published following failed negotiations.

ShinyHunters has historically operated on a data exfiltration and extortion model, often combining breach claims with sale listings or public leaks. A detailed overview of the group’s activity is available in the BreachNews ShinyHunters threat actor profile.

AI intellectual property emerges as high-value target

If legitimate, the exposure of internal AI model data could carry significant implications beyond traditional data breaches. Proprietary model architectures, training methods, and system designs represent core intellectual property for AI companies and can provide competitive advantages in model performance and deployment.

Unlike customer data breaches, incidents involving AI systems may also introduce risks related to model replication, misuse, or unauthorized access to advanced capabilities, depending on the nature of the systems involved.

No confirmation from Anthropic as claims remain unverified

At this stage, there has been no confirmation from Anthropic regarding the alleged breach or the authenticity of the materials described. As with similar listings, the claims are based solely on threat actor statements without independent verification.

Further developments may emerge if the data is publicly released, independently analyzed, or addressed by the organization.